AI Feedback Loops: The Ouroboros Effect Behind Model Collapse

Artificial intelligence is starting to face a strange problem: it is learning from itself.

As more AI-generated articles, images, code snippets, summaries, reports, and datasets appear online, future AI systems could end up training on a growing mountain of stuff made by machines. That creates a funny feedback loop: a model spits out content, the content is published on the open web, and new models train on it, then churn out more based on patterns they picked up from the previous models.

This is what is known as the ouroboros effect in AI.

It gets its name from that old symbol: a snake eating its own tail. In AI, it describes a cycle where models learn from other models, or even from themselves, until the quality starts to go downhill.

For businesses, the worry is not just that AI content gets a bit dull and repetitive. It is whether their internal tools, customer-facing systems, and even AI-assisted decisions are starting to rely on dodgy information without anyone realising it is happening.

That matters because the issue is no longer theoretical. A paper published in Nature found that models trained on recursively generated data can suffer from model collapse, a process where the model gradually loses important parts of the original data distribution and becomes less accurate, less diverse, and less useful over time.

What Is an AI Feedback Loop?

An AI feedback loop happens when the output of an AI system becomes part of the input for future AI systems.

A simple AI feedback loop looks like this:

- An AI model generates content.

- That content is published, stored, scraped, reused, or added to a dataset.

- A future AI model trains on that content.

- The next model produces more content based on those patterns.

- The cycle repeats.

The risk increases when that loop runs without human review, source labelling, or quality checks.

Not every AI feedback loop is harmful. Some feedback loops are useful when they include human review, high-quality data checks, reinforcement learning, or real-world validation.

For example, a support chatbot that learns from reviewed customer interactions, approved knowledge base updates, and measured satisfaction scores can improve over time. The difference is that the loop is supervised, measured, and grounded in real business feedback.

The problem begins when AI-generated content is reused without clear labels, quality controls, or enough human-created data to balance it.

That is where the AI ouroboros effect can become dangerous.

Instead of learning from real data (human language, behaviour, edge cases, and creativity), the model starts learning from a narrower copy of a copy. Over time, errors can compound, which can mean that rare examples disappear and outputs become flatter, more repetitive, and more confident in weak assumptions.

What Is the Ouroboros Effect in AI?

The ouroboros effect in AI is when AI systems start feeding on the outputs of earlier AI systems. The metaphor makes a technical risk easy to understand: a system can appear productive while slowly narrowing the quality of the information it learns from.

It also fits because the risk is circular. AI no longer just produces content for humans. It increasingly produces content that may shape the next generation of AI systems.

For example, a company uses AI to generate hundreds of blog posts. Those posts are published online. Later, a model is trained on web content that includes those posts. That model learns from the previous model’s wording, shortcuts, errors, and patterns. It then creates more content that follows the same structure. Over several rounds, the content can become less original and less connected to real human knowledge.

A similar risk can happen inside your own business. If an internal AI assistant is trained on AI-generated documentation, summaries, or support notes that were never reviewed by a human, the system may begin repeating weak assumptions across future answers. Over time, your employees may trust responses that sound polished but are built on increasingly thin source material.

The same pattern can apply to code, images, product descriptions, medical summaries, financial commentary, customer service scripts, and synthetic datasets.

This does not mean AI-generated content is automatically bad. It means that the risk comes from unfiltered recursive training, especially when synthetic content replaces real-world data instead of supplementing it.

What Is AI Model Collapse?

AI model collapse is the degradation that can happen when models are repeatedly trained on outputs from earlier models.

The Nature paper on model collapse describes it as a degenerative process where generated data pollutes the training set for future models. The researchers found that indiscriminate use of model-generated content in training can cause serious defects, including the loss of less common details from the original data.

Model collapse is not just about a model becoming “worse” in a vague way. It can mean the model gradually loses rare, unusual, or highly specific examples that were present in the original training data. For example:

- A customer support AI may become worse at handling unusual cases and complaints.

- A coding assistant may become weaker with unusual architecture decisions.

- A medical summarization tool may miss rare but important context.

- A search or recommendation system may start returning more generic results.

That creates problems because many high-value business and research use cases depend on exactly those edge cases.
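To make the loss of rare cases concrete, here is a toy simulation. It is not the experiment from the Nature paper, just a sketch under simple assumptions: a hypothetical corpus of 200 tokens with one common case and 20 rare ones, where "training" means counting tokens and "generating" means resampling from those counts. The names `next_generation` and `simulate` are illustrative.

```python
import random
from collections import Counter

def next_generation(corpus, rng):
    """'Train' by counting tokens, then 'generate' a same-sized corpus
    by sampling from those counts."""
    counts = Counter(corpus)
    tokens = list(counts)
    weights = [counts[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=len(corpus))

def simulate(generations=30, seed=0):
    rng = random.Random(seed)
    # Hypothetical "real" data: one common case plus 20 rare edge cases.
    corpus = ["common"] * 180 + [f"rare_{i}" for i in range(20)]
    distinct = [len(set(corpus))]
    for _ in range(generations):
        corpus = next_generation(corpus, rng)  # each model only sees the last model's output
        distinct.append(len(set(corpus)))
    return distinct

distinct_per_generation = simulate()
print(distinct_per_generation)  # number of distinct tokens left after each generation
```

Once a rare token fails to appear in one generation, it has zero probability in every later one, so the count of distinct tokens can only stay flat or fall. That is the "copy of a copy" problem in miniature.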

Ouroboros Effect vs. Model Collapse

| Concept | Meaning | Why It Matters |
| --- | --- | --- |
| AI feedback loop | AI output becomes future AI input | Can be useful or harmful depending on controls |
| Ouroboros effect in AI | A self-consuming AI training cycle | Helps explain the risk in simple terms |
| AI model collapse | Model quality degrades across recursive training cycles | Can reduce accuracy, diversity, and reliability |
| Recursive training | A model is trained on outputs from previous AI systems | Can compound errors or narrow the model’s understanding over time |
| Synthetic data risk | AI-generated data is overused or poorly filtered | Can weaken the training signal over time |
| Data poisoning | Bad or manipulated data harms model behaviour | More security-focused than model collapse |

The ouroboros effect is useful for explaining the big picture. Model collapse gives the concept technical weight.

Why AI Feedback Loops Are Becoming Harder to Avoid

AI feedback loops are becoming more likely because AI-generated content is now part of the internet’s normal content supply.

- Businesses use AI to draft blog posts, product pages, ads, emails, reports, images, documentation, code, and support responses.

- Publishers use AI to summarize news and rewrite existing material.

- Developers use AI assistants to generate code.

- Platforms use AI to moderate, recommend, translate, and personalize content.

This means the training environment for future models is changing.

The public web is no longer made only of human-created material. It now includes a growing amount of machine-generated material. That creates a data-quality challenge for AI labs, search engines, publishers, and businesses using AI in their own systems.

The risk becomes larger when AI-generated content is:

- not labelled

- scraped into training data without filtering

- used to replace human-created examples

- generated at a massive scale

- low quality or repetitive

- disconnected from real-world validation

- reused across multiple model generations

Inside a business, this can show up in quieter ways. An internal knowledge base may be filled with AI-written summaries that no one has verified. A support team may reuse AI-generated responses until weak explanations become standard language. A product team may rely on synthetic test cases that miss unusual customer behaviour. None of these failures looks dramatic at first, but they can slowly reduce the quality of decisions, workflows, and customer experiences.

Synthetic data can still be useful. In fact, many organizations use it to fill gaps, protect privacy, test systems, or train models when real data is limited. But synthetic data needs controls. It should not become a substitute for reality.

Is Synthetic Data Always Bad for AI Training?

The problem is not the synthetic data itself. The problem is poor synthetic data governance.

A separate research paper, Is Model Collapse Inevitable?, found that replacing original real data with synthetic data can lead to collapse, but accumulating synthetic data alongside real data can avoid collapse across several model types and settings.
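The difference between replacing and accumulating can be illustrated with a deliberately tiny model. The sketch below is an assumption-laden toy, not the paper's setup: the "model" is just a mean and standard deviation fitted to numbers, the "real data" is 10 draws from a standard normal distribution, and the function names are hypothetical.

```python
import random

def fit(data):
    """'Train' a toy model: estimate the mean and sample standard deviation."""
    mean = sum(data) / len(data)
    var = sum((x - mean) ** 2 for x in data) / (len(data) - 1)
    return mean, var ** 0.5

def generate(model, n, rng):
    """'Generate' n synthetic points from the fitted model."""
    mean, std = model
    return [rng.gauss(mean, std) for _ in range(n)]

def run(strategy, generations=300, n=10, seed=1):
    rng = random.Random(seed)
    pool = [rng.gauss(0.0, 1.0) for _ in range(n)]  # stand-in for real data
    for _ in range(generations):
        synthetic = generate(fit(pool), n, rng)
        if strategy == "replace":
            pool = synthetic          # earlier data is thrown away
        else:
            pool = pool + synthetic   # synthetic data is added alongside earlier data
    return fit(pool)[1]               # spread the final model still captures

replaced_std = run("replace")
accumulated_std = run("accumulate")
print(f"replace: {replaced_std:.6f}  accumulate: {accumulated_std:.6f}")
```

In the replace run, each generation re-estimates the spread from a small sample of the previous model's output, and those estimation errors compound until the spread shrinks toward zero. In the accumulate run, the original data never leaves the pool, so the estimate stays anchored.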

Synthetic data can be useful when it is used to:

- simulate rare scenarios

- improve privacy

- augment limited datasets

- test edge cases

- support model evaluation

- generate training examples under human supervision

Synthetic data becomes more valuable when teams can measure where it helps, where it introduces distortion, and whether it improves real-world model performance.

It becomes risky when organizations treat it as unlimited free training material with no quality controls.

Examples of AI Model Collapse

AI model collapse can show up in different ways depending on the system.

- Content systems: AI-generated articles become more repetitive because future outputs are based on earlier machine-written content.

- Internal knowledge tools: AI assistants repeat weak summaries because the source material was never reviewed.

- Customer support: Chatbots become less useful for unusual complaints because rare cases are underrepresented.

- Software development: Coding assistants repeat common patterns but become weaker at unusual architecture or security scenarios.

In text models, it may look like repetitive phrasing, weaker reasoning, factual drift, shallow summaries, or the loss of uncommon viewpoints. In image models, it may show up as less visual variety, distorted patterns, or outputs that converge toward generic styles. In code models, it may appear as repeated insecure patterns, weak architecture assumptions, or solutions that look correct but fail under real conditions.

Model Collapse vs. AI Data Poisoning

AI model collapse and AI data poisoning both involve training data going bad, but they are not the same thing.

Model collapse is usually about recursive degradation. The system weakens because it keeps learning from synthetic outputs that have lost information from the original data.

Data poisoning is usually about manipulation. An attacker or bad actor inserts harmful, misleading, or biased data into a training set to influence model behaviour.

NIST’s guidance on adversarial machine learning describes risks that include attacks designed to manipulate training data or model behaviour.

| Risk | Main Cause | Example |
| --- | --- | --- |
| Model collapse | Too much recursive AI-generated training data | A model becomes generic after training on AI-written articles |
| Data poisoning | Malicious or poor-quality data injected into training | A model is manipulated to associate a brand with false claims |
| Synthetic data contamination | AI-generated data enters datasets without labelling | Future models train on unknown machine-made content |
| Feedback-loop degradation | AI systems reinforce their own weak patterns | Outputs become more repetitive across generations |

AI quality depends on data quality. Whether the issue is synthetic data, poisoned data, stale data, biased data, or poorly labelled data, the result is the same: the system becomes less trustworthy.

Why This Matters for Businesses

The ouroboros effect affects any business using AI for content, customer support, software development, research, data analysis, internal knowledge management, or decision support.

If your business uses AI tools regularly without understanding where the data comes from, how outputs are reviewed, or how synthetic material is handled, you may end up with systems that sound useful but gradually weaken your information quality.

| Business Area | Feedback-Loop Risk | Practical Consequence |
| --- | --- | --- |
| Marketing | AI-generated content trains future content patterns | More generic messaging and weaker originality |
| Customer Support | AI responses are reused without review | Incorrect or shallow answers become standard |
| Software Development | AI-generated code patterns are repeated | Technical debt or security issues become harder to spot |
| Internal Knowledge | AI summaries replace verified documentation | Employees rely on weaker source material |
| Executive Reporting | AI-generated summaries shape decisions | Leaders act on incomplete or circular information |

The risk is not that AI stops working overnight. The bigger risk is quiet degradation. The answers become more generic. The summaries lose nuance. The recommendations become circular. The model repeats familiar patterns instead of finding stronger ones.

EspioLabs supports businesses in Ottawa and across Canada with AI solutions that help turn these ideas into usable systems, from workflow automation to applied AI product development. Learn more about our AI services.

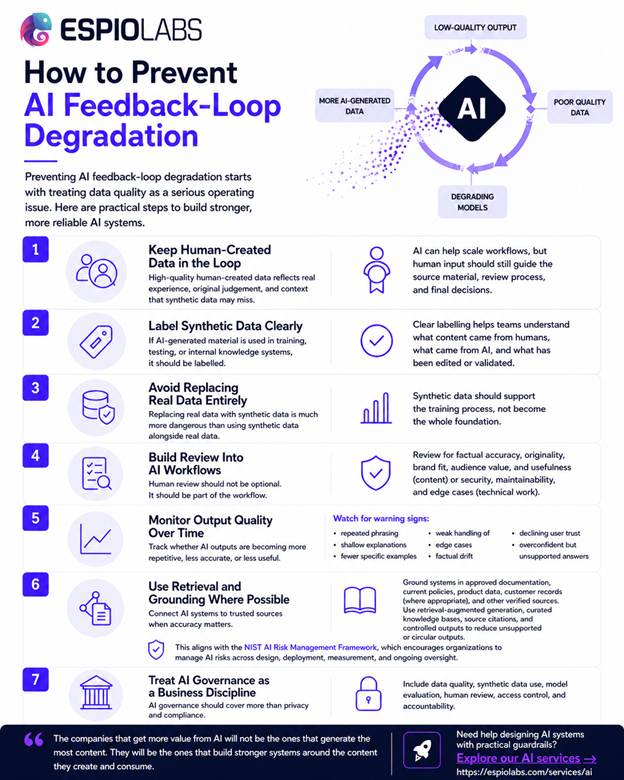

How to Prevent AI Feedback-Loop Degradation

Preventing AI feedback-loop degradation starts with treating data quality as a serious operating issue.

Businesses should not rely on AI output simply because it sounds polished. They need a process for reviewing, labelling, validating, and improving the information that enters AI systems.

1. Keep Human-Created Data in the Loop

High-quality human-created data remains valuable because it reflects real experience, original judgment, and context that synthetic data may miss.

AI can help scale workflows, but human input should still guide the source material, review process, and final decisions.

2. Label Synthetic Data Clearly

If AI-generated material is used in training, testing, or internal knowledge systems, it should be labelled.

Clear labelling helps teams understand what content came from humans, what came from AI, and what has been edited or validated.
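One lightweight way to do this is to store a provenance label on every record. The sketch below is only an illustration: the `Provenance` categories, the `Record` fields, and the `trusted_records` filter are hypothetical names, and a real system would likely track more detail (model version, review date, and so on).

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Provenance(Enum):
    HUMAN = "human"
    AI_GENERATED = "ai_generated"
    AI_HUMAN_REVIEWED = "ai_human_reviewed"

@dataclass(frozen=True)
class Record:
    text: str
    provenance: Provenance
    reviewer: Optional[str] = None  # who validated it, if anyone

def trusted_records(records):
    """Keep human-written records and AI records that a person has reviewed."""
    allowed = {Provenance.HUMAN, Provenance.AI_HUMAN_REVIEWED}
    return [r for r in records if r.provenance in allowed]

records = [
    Record("Refund policy written by the support lead.", Provenance.HUMAN),
    Record("Auto-generated ticket summary.", Provenance.AI_GENERATED),
    Record("AI draft of the setup guide, checked by the docs team.",
           Provenance.AI_HUMAN_REVIEWED, reviewer="docs-team"),
]
kept = trusted_records(records)
print([r.provenance.value for r in kept])  # ['human', 'ai_human_reviewed']
```

With the label in place, unreviewed AI output can still be stored and searched, but it can be kept out of training sets and knowledge bases until someone has checked it.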

3. Avoid Replacing Real Data Entirely

The research on whether model collapse is inevitable suggests that replacing real data with synthetic data is much more dangerous than using synthetic data alongside real data.

That means synthetic data should support the training process, not become the whole foundation.
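In practice, "support, not replace" can be enforced when a dataset is assembled. The following sketch keeps every real example and caps the synthetic share. The function name and the 25% default are assumptions chosen for illustration, not a threshold taken from the research.

```python
import random

def build_training_set(real, synthetic, max_synthetic_fraction=0.25, seed=0):
    """Return all real examples plus at most enough synthetic examples
    to keep the synthetic share at or below max_synthetic_fraction."""
    if not 0 <= max_synthetic_fraction < 1:
        raise ValueError("max_synthetic_fraction must be in [0, 1)")
    # s / (r + s) <= f  rearranges to  s <= f * r / (1 - f)
    limit = int(max_synthetic_fraction * len(real) / (1 - max_synthetic_fraction))
    rng = random.Random(seed)
    chosen = rng.sample(synthetic, min(limit, len(synthetic)))
    return list(real) + chosen

real = [f"human_{i}" for i in range(75)]
synthetic = [f"ai_{i}" for i in range(100)]
training_set = build_training_set(real, synthetic)
print(len(training_set), sum(x.startswith("ai_") for x in training_set))  # 100 25
```

The right cap depends on the task and should be set by measuring real-world performance, but making it an explicit parameter means the mix is a decision rather than an accident.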

4. Build Review Into AI Workflows

Human review should not be treated as an optional final step. It should be part of the workflow.

For example, businesses using AI for content should review for factual accuracy, originality, brand fit, audience value, and usefulness. Businesses using AI for technical work should review for security, maintainability, and edge cases.

5. Monitor Output Quality Over Time

Teams should track whether AI outputs are becoming more repetitive, less accurate, or less useful.

Warning signs may include:

- repeated phrasing

- shallow explanations

- fewer specific examples

- weak handling of edge cases

- factual drift

- declining user trust

- overconfident but unsupported answers
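Some of these signs can be tracked with simple numbers. As one example, repeated phrasing can be approximated with a distinct-n style ratio: the share of unique word n-grams across a batch of outputs. This is a rough sketch with whitespace tokenisation and a hypothetical `distinct_n` helper. It will not catch factual drift or weak reasoning, which still need human review.

```python
def distinct_n(texts, n=2):
    """Share of unique n-grams across a batch of outputs (1.0 = no repetition)."""
    ngrams = []
    for text in texts:
        tokens = text.lower().split()
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0

varied = ["reset the router then wait", "check the billing page first"]
repetitive = ["we value your feedback", "we value your feedback"]
print(distinct_n(varied), distinct_n(repetitive))  # 1.0 0.5
```

Logging a score like this for each week's outputs gives a trend line: a steady decline suggests the system is converging on the same phrases.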

6. Use Retrieval and Grounding Where Possible

For business use cases, AI systems should be connected to trusted sources when accuracy matters.

Retrieval-augmented generation, curated knowledge bases, source citations, and controlled datasets can help reduce unsupported or circular outputs. This aligns with the NIST AI Risk Management Framework, which encourages organizations to manage AI risks across design, deployment, measurement, and ongoing oversight.
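The core idea of grounding fits in a few lines. The sketch below is a simplified stand-in: it matches on shared words where a production system would typically use embeddings or a search index, and the knowledge base entries, source IDs, and function names are all hypothetical. What it shows is the shape of the workflow: retrieve from a curated source, attach citations, and decline to answer when nothing trusted is found.

```python
def retrieve(question, knowledge_base, min_overlap=2):
    """Return (source_id, passage) pairs that share enough words with the question."""
    q_words = set(question.lower().split())
    hits = []
    for source_id, passage in knowledge_base.items():
        overlap = len(q_words & set(passage.lower().split()))
        if overlap >= min_overlap:
            hits.append((overlap, source_id, passage))
    hits.sort(reverse=True)  # best match first
    return [(source_id, passage) for _, source_id, passage in hits]

def grounded_prompt(question, knowledge_base):
    """Build a prompt that restricts the model to cited, curated sources."""
    sources = retrieve(question, knowledge_base)
    if not sources:
        return None  # nothing trusted to cite: escalate instead of guessing
    context = "\n".join(f"[{sid}] {text}" for sid, text in sources)
    return f"Answer using only these sources and cite them:\n{context}\n\nQuestion: {question}"

kb = {
    "policy-12": "refunds are issued within 14 days of purchase",
    "guide-3": "reset the router by holding the button for ten seconds",
}
print(grounded_prompt("how do i reset the router", kb))
print(grounded_prompt("what is the weather today", kb))  # None
```

Returning `None` when no source matches is the important design choice here: the system hands off to a person rather than producing a polished answer with nothing behind it.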

For teams building internal AI tools, grounding is where strategy becomes execution. EspioLabs supports businesses with AI solutions that connect models to trusted data, workflows, and governance from the start.

7. Treat AI Governance as a Business Discipline

AI governance should cover more than privacy and compliance. It should include data quality, synthetic data use, model evaluation, human review, access control, and accountability.

The companies that get more value from AI will not be the ones that generate the most content. They will be the ones that build stronger systems around the content they create and consume.

This is also why AI implementation should not be treated as a tool-selection exercise. The stronger question is how the system will use data, where human review belongs, and how quality will be measured over time.

For businesses building AI into internal operations, customer experiences, or software products, EspioLabs helps design AI systems with practical guardrails from the start. Explore our AI services to see how this type of work can be approached with more structure.

Is Model Collapse Inevitable?

Model collapse is not necessarily inevitable, but it is a real risk.

The strongest research takeaway is that recursive AI training becomes dangerous when synthetic outputs replace high-quality real data. However, research on model collapse suggests the risk can be reduced when synthetic data is accumulated with real data, properly managed, and tested.

That gives businesses a more practical way to think about the issue.

Build AI Systems That Do Not Feed on Weak Data

The ouroboros effect is a useful warning for any organization adopting AI. AI systems need trusted data, clear governance, human review, and practical workflows that prevent recycled outputs from becoming the foundation for future decisions.

If your organization is exploring AI implementation, contact EspioLabs today to discuss how we can help you plan, design, and build AI solutions that stay grounded in real business needs.

FAQs About AI Feedback Loops and the Ouroboros Effect

How can businesses reduce the risk of AI model collapse?

Businesses can reduce the risk of AI model collapse by keeping high-quality human-reviewed data in the loop, labelling synthetic data, validating AI outputs, monitoring performance over time, and grounding AI systems in trusted sources instead of relying only on generated content.

Can AI train on AI-generated data?

Yes, AI can train on AI-generated data, but the process needs strong controls. Research on synthetic and real data accumulation suggests that synthetic data is less risky when it is added alongside real data rather than used to replace it.

What is the difference between model collapse and data poisoning?

Model collapse usually happens through recursive degradation when AI models train on AI-generated outputs. Data poisoning happens when harmful or misleading data is deliberately or accidentally introduced into a training set.

Is synthetic data bad for AI training?

Synthetic data is not automatically bad. It can help with privacy, testing, and rare-case simulation. The risk comes from relying on synthetic data without enough real data, labelling, validation, and quality control.

What is AI model collapse?

AI model collapse is the degradation that can happen when generative models are repeatedly trained on recursively generated data.

What is an AI feedback loop?

An AI feedback loop happens when AI-generated output becomes input for future AI systems.

What is the ouroboros effect in AI?

The ouroboros effect in AI is a self-consuming feedback loop where AI systems train on content produced by other AI systems. Over time, this can reduce quality, diversity, and accuracy.