What Is Data Modernization and Why Does It Matter for AI Adoption?

By Simon Kadota • May 21, 2026

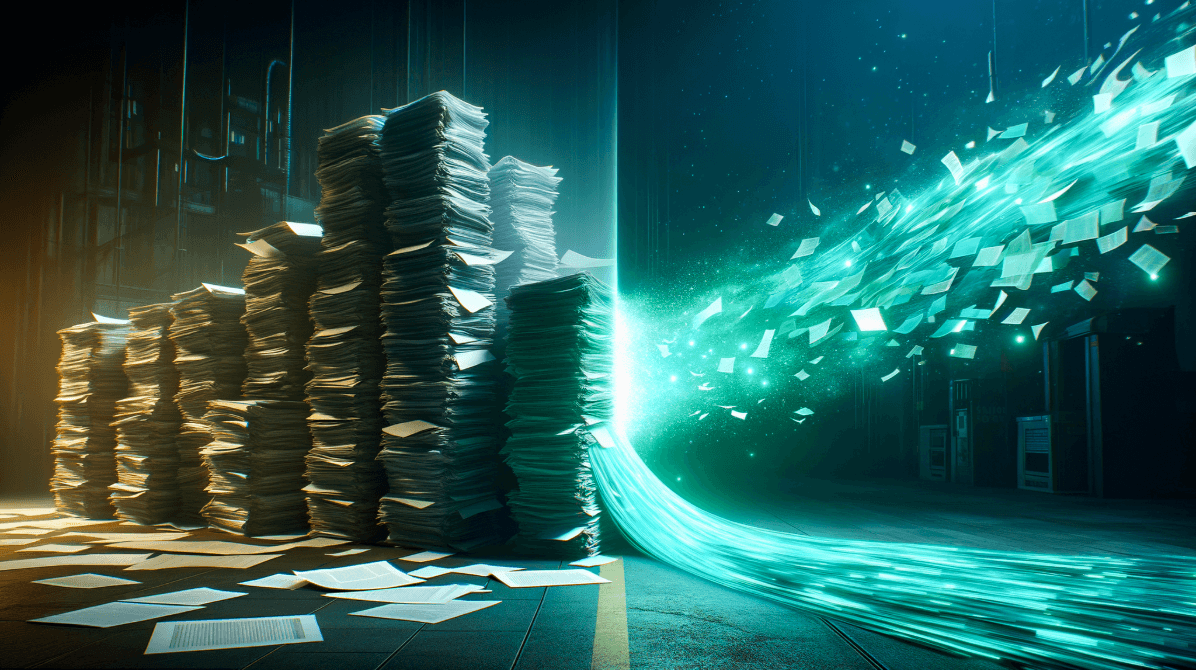

Most businesses today are interested in AI, but their data is not always ready to support it. Customer records may live in one platform, sales notes in another, documents in shared drives, reports in ...